Building HasteKit: A LLM Gatway & Agent SDK For Golang From Scratch

HasteKit is an open-source LLM & Agent SDK for Golang, that can also function as an LLM Gateway & No-code agent builder. It’s the outcome of a learning project I worked on for about three months – the longest I’ve ever stayed committed to a side project. This post outlines the implementation details of it.

When the AI hype started shouting, “Build GenAI apps! Build agents!”, I went looking for a place to begin. After some thought, I chose to explore LLMs and agents the hard way – by reading model provider documentation and building everything from scratch.

Why?

- Frameworks tend to hide the interesting nitty-gritty.

- Your creativity gets boxed into whatever the framework designers envisioned.

- And since most of my other projects are built with Go, I looked for something solid in the ecosystem but nothing felt strong or mature enough.

So I started building.

Along the way, I learned a lot: from the fundamentals of invoking models through REST APIs and switching seamlessly between providers, to deeper concepts like observability, agent loops, tools, MCP, conversation history, summarization, memory, skills, sub-agents, handoffs, and durable agent systems.

Building each of these pieces from scratch and seeing how naturally they composed together — was oddly addictive. I kept adding one capability after another.

The result is HasteKit: a small but powerful Go SDK that makes it easy to switch between model providers and build durable, production-ready agents with all the capabilities mentioned above.

The rest of this post dives into the architecture and implementation details of the LLM SDK & LLM Gateway. The Agent SDK and the no-code agent builder deserve their own deep dive – I’ll cover those in a separate post. If you’re curious about how everything works under the hood or you are implementing something on your own project, read on.

Building LLM SDK for Golang & LLM Gateway Server

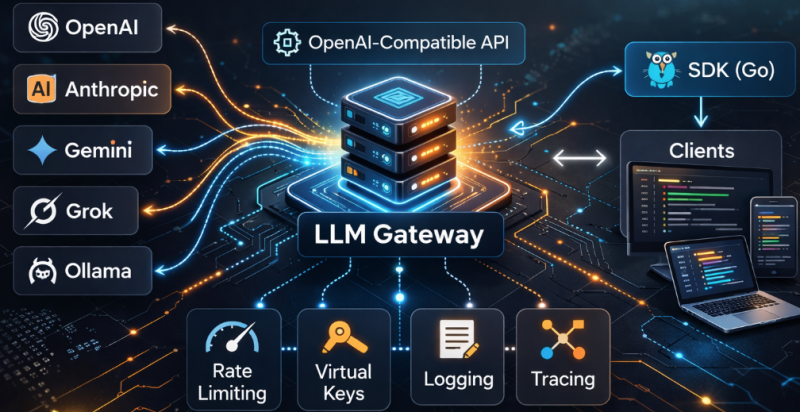

We are covering two things:

1. An LLM SDK – a Go library you can import into your project to make LLM calls across multiple providers using a unified request/response interface.

2. An LLM Gateway Server – a standalone service that implements OpenAI-compatible APIs, allowing existing applications (already using an OpenAI SDK) to switch providers, get usage insights and observability simply by changing the base URL.

Layer 1: Defining The Common Interface

Each model provider exposes slightly different request/response formats, parameter names, streaming mechanisms, and feature flags. To prevent those differences from leaking into application code, we define a provider-agnostic interface.

This layer introduces standardized request and response objects for all core capabilities:

- Text generation

- Image generation

- Text-to-speech

- Speech-to-text

- Embeddings

It also defines a Provider interface that each provider specific implementation must satisfy:

type Provider interface {

NewResponses(ctx context.Context, in *responses.Request) (*responses.Response, error)

NewStreamingResponses(ctx context.Context, in *responses.Request) (chan *responses.ResponseChunk, error)

NewEmbedding(ctx context.Context, in *embeddings.Request) (*embeddings.Response, error)

NewChatCompletion(ctx context.Context, in *chat_completion.Request) (*chat_completion.Response, error)

NewStreamingChatCompletion(ctx context.Context, in *chat_completion.Request) (chan *chat_completion.ResponseChunk, error)

NewSpeech(ctx context.Context, in *speech.Request) (*speech.Response, error)

NewStreamingSpeech(ctx context.Context, in *speech.Request) (chan *speech.ResponseChunk, error)

}Based on the incoming model identifier (e.g., "OpenAI/gpt-4.1" or "Anthropic/claude-haiku-4-5"), we route the request to the appropriate provider.

HasteKit adopts OpenAI’s API format as the common interface, and you can find the source code here: https://github.com/hastekit/hastekit-sdk-go/tree/master/pkg/gateway/llm

Layer 2: Provider Specific Implementations & Translations

This layer implements the Provider interface for each supported model provider. HasteKit currently supports: OpenAI, Gemini, Anthropic, Grok & Ollama. You can find the source code here: https://github.com/hastekit/hastekit-sdk-go/tree/master/pkg/gateway/providers

Each provider client:

- Receives a request in the common format.

- Translates it into the provider-specific payload.

- Calls the provider’s REST API.

- Translates the provider’s response back into the common format.

- For streaming APIs, translation happens incrementally for each chunk received.

Together, Layer 1 + Layer 2 allow applications to:

- Send requests in a consistent structure

- Route them to any supported provider

- Receive responses back in a standardized format

At this point, HasteKit functions as a clean LLM SDK or wrapper. You can interact with multiple providers using an unified interface.

resp, err := client.NewResponses(ctx, &responses.Request{

Model: "OpenAI/gpt-4.1-mini", // Or "Anthropic/claude-haiku-4-5"

Instructions: utils.Ptr("You are a helpful assistant."),

Input: responses.InputUnion{

OfString: utils.Ptr("What is the capital of France?"),

},

})Layer 3: LLM Gateway Server & Reverse Translation Layer

To function as a drop-in replacement for provider-native SDKs, this layer introduces an HTTP server that implements OpenAI & Anthropic compatible endpoints. This allows HasteKit to run as a standalone gateway service, and handle requests made using any OpenAI/Anthropic compatible SDKs.

The request lifecycle looks like this:

- A client sends a request in OpenAI format.

- The server parses it into a common request structure. In HasteKit’s case, it is OpenAI format itself.

- The request is routed to the appropriate provider client based on the model identifier provided, e.g “Anthropic/claude-haiku-4-5”.

- The provider client translates the common request structure into the provider-specific format, which is Anthropic in this case.

- The provider response is translated into the common format.

- The response is translated back into an OpenAI-compatible HTTP response. This is the reverse translation.

While Layer 2 handles translation internally, this layer ensures that existing applications – especially those already built against OpenAI’s or Anthropic’s SDK – can switch to HasteKit simply by changing the base URL. For example:

from openai import OpenAI

client = OpenAI(

base_url="http://localhost:6060/api/gateway/openai",

api_key="sk-hk-your-virtual-key-here",

)

# Use Gemini model

response = client.responses.create(

model="Gemini/gemini-3-flash-preview",

instructions="You are a helpful assistant.",

input="Hello, how are you?",

)This turns HasteKit into:

- A Go SDK (when embedded directly), and

- A drop-in, OpenAI-compatible LLM Gateway (when deployed as a service).

Layer 4: Virtual Keys, Rate Limiting & Observability Using Middlewares

Since every request is first normalized into a common format (Layer 1), HasteKit naturally creates a clean interception point before the request is routed to a provider – and again before the response is translated back into a provider-specific format.

This makes the common interface layer the ideal place to introduce middlewares. Because they operate on a standardized contract, the middlewares remain provider-agnostic and composable. It’s a natural spot to implement cross-cutting concerns such as virtual key management, throttling, and telemetry.

Virtual Key Management

Instead of distributing raw provider API keys, we can now introduce virtual keys.

A virtual key is mapped to one or more provider-specific API keys. External clients receive only the virtual key, never the actual provider credentials.

At the middleware layer, incoming requests are inspected for a virtual key. The system then looks up the corresponding provider key in the database and transparently replaces it before forwarding the request upstream.

This adds an abstraction layer for security, access control, and key rotation – without exposing real provider credentials to end users.

Note: To avoid the latency of querying the database on every request, provider key mappings are cached in memory. These in-memory entries are kept in sync using Postgres Pub/Sub, ensuring updates propagate across gateway instances without adding request-time overhead.

Rate Limiting

Once virtual keys are in place, rate limiting naturally follows.

Rate limits are defined at the virtual key level, allowing you to control usage per client without worrying about the underlying provider keys. This keeps enforcement consistent, regardless of which provider ultimately handles the request.

The rate limiting middleware uses a token bucket algorithm to regulate traffic. To support horizontal scaling, the bucket state is stored in Redis, ensuring limits are enforced correctly even when multiple instances of the gateway are running. This makes throttling distributed, predictable, and provider-agnostic.

Logging & Tracing

With throttling handled, the next cross-cutting concern is visibility.

Logging is kept simple: structured logs go straight to stdout/stderr, so you can rely on whatever log collector you already use (Docker, Kubernetes, Loki, etc.).

For tracing, HasteKit emits OpenTelemetry traces and exports them to ClickHouse using an OTel ClickHouse exporter. This makes it easy to track request latency, provider behavior, and failures end-to-end – especially when you’re running multiple gateway instances.

Extensibility

This middleware layer can be extended to support additional cross-cutting concerns such as – Guardrails, Validation & Policy enforcement.

While these are not currently implemented, the architecture makes it straightforward to introduce them without modifying core provider or translation logic.

With this, we complete the implementation of the core LLM SDK and Gateway server. At this point, HasteKit can:

- Abstract multiple providers behind a common interface

- Run as a drop-in OpenAI-compatible gateway

- Handle virtual keys, rate limiting, and observability

- Scale horizontally with distributed state

And that brings us to the end of this post.

HasteKit started as a learning experiment – an attempt to understand LLMs and agents from first principles. Along the way, it evolved into concrete pieces of infrastructure: LLM & Agent SDK for Golang, LLM Gateway and No-code agent builder, all built from scratch.

If this interests you, I’d encourage you to explore the documentation and browse the source code.